Direct Answer: AI website builders are crushing traditional developers for SME projects because they deliver conversion-ready, schema-marked, SEO-structured sites in 90 minutes at near-zero cost — compared to 3–21 days and $800–$8,000 per engagement. The displacement is structural: AI includes the ranking infrastructure developers routinely omit, ships without ticket queues, and compounds output quality with every iteration. This is not a quality story. It is a speed, cost, and SEO infrastructure story — and the data in 2026 is unambiguous.

Not because AI writes better code. Because AI ships faster, includes the SEO infrastructure developers forget, costs 94% less, and requires zero ticket queues. The mechanism is structural — and it is accelerating.

Why Is This Happening Now — What Specifically Changed?

The displacement of traditional web developers by AI builders is not a story about AI writing better code than humans. In 2026, it is not even close to that. The story is about three structural advantages that have nothing to do with code quality and everything to do with how SMEs actually use web development.

The first advantage is iteration speed. A developer operates on a ticket-and-response cycle: brief submitted, quote approved, work scheduled, draft delivered, revisions requested, final delivered. For a standard landing page, this cycle takes 3–10 business days from brief to deployed. An AI builder completes the same task in 90 minutes from prompt to deployed page. The speed difference is not incremental — it is categorical.

The second advantage is SEO infrastructure by default. When an SME founder prompts an AI with the five-part prompt architecture, the output includes semantic HTML structure, JSON-LD schema markup, a direct answer block, FAQPage structured data, and optimised meta tags as part of the same generation. A developer builds a page. An AI builder builds a page with its ranking infrastructure already installed. The Clutch Developer Survey 2025 found that only 12% of developer-built SME pages include structured data schema as standard, versus 88% of AI-prompted pages when schema is included in the output format specification.
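To make "FAQPage structured data" concrete, here is a minimal Python sketch of what that part of the output looks like. It uses the schema.org FAQPage, Question, and Answer types. The function names and the sample question are illustrative assumptions, not part of any particular AI builder's output.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialise the object into the <script> tag that is placed in the page HTML."""
    # Escape "</" so answer text can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder content for illustration only.
example = faq_jsonld([
    ("Does Google penalise AI-generated website content?",
     "No. Ranking systems evaluate quality and structure, not origin."),
])
print(as_script_tag(example))
```

The same question-and-answer pairs should also appear as visible text on the page. The markup describes content that is there; it does not replace it.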

The third advantage is cost compression. A mid-market developer charges $85–$140 per hour (Bureau of Labor Statistics, Occupational Outlook Handbook 2025). A paid AI subscription costs $20–$200 per month for unlimited page generation. The cost differential for a single page — approximately $240–$2,800 developer versus $0 incremental AI — produces a 94% average cost reduction across a typical SME annual web task portfolio. That reduction is not marginal. It changes the economics of web presence maintenance from a budgeted expense to a near-zero ongoing cost.
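One way to sanity-check the cost argument is a break-even calculation: how many developer hours per month cost the same as one month of subscription? The sketch below uses only the hourly rates and subscription prices quoted above. The function name and the best-case and worst-case pairing are assumptions made for illustration.

```python
def break_even_hours(monthly_fee, hourly_rate):
    """Developer hours per month whose cost equals one month of subscription."""
    return monthly_fee / hourly_rate


# Figures quoted in the paragraph above.
SUBSCRIPTION = (20, 200)   # USD per month, low and high
DEV_RATE = (85, 140)       # USD per hour, low and high

# Cheapest plan against the most expensive developer.
best_case = break_even_hours(SUBSCRIPTION[0], DEV_RATE[1])
# Most expensive plan against the cheapest developer.
worst_case = break_even_hours(SUBSCRIPTION[1], DEV_RATE[0])

print(f"Break-even: {best_case:.2f} to {worst_case:.2f} developer hours per month")
```

On these figures, even the most expensive subscription costs about as much as 2.35 hours of the cheapest developer's time. Any month in which AI replaces more developer time than that is a net saving.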

// The Real Disruption Mechanism

Traditional developers are not being displaced because AI writes better code. They are being displaced because the knowledge gap that justified their rates — understanding HTML, CSS, schema syntax, and CMS configuration — no longer exists in the same form. Any founder who can write a structured English sentence can now produce a deployable, schema-marked, SEO-ready page. The moat was knowledge. The moat is gone.

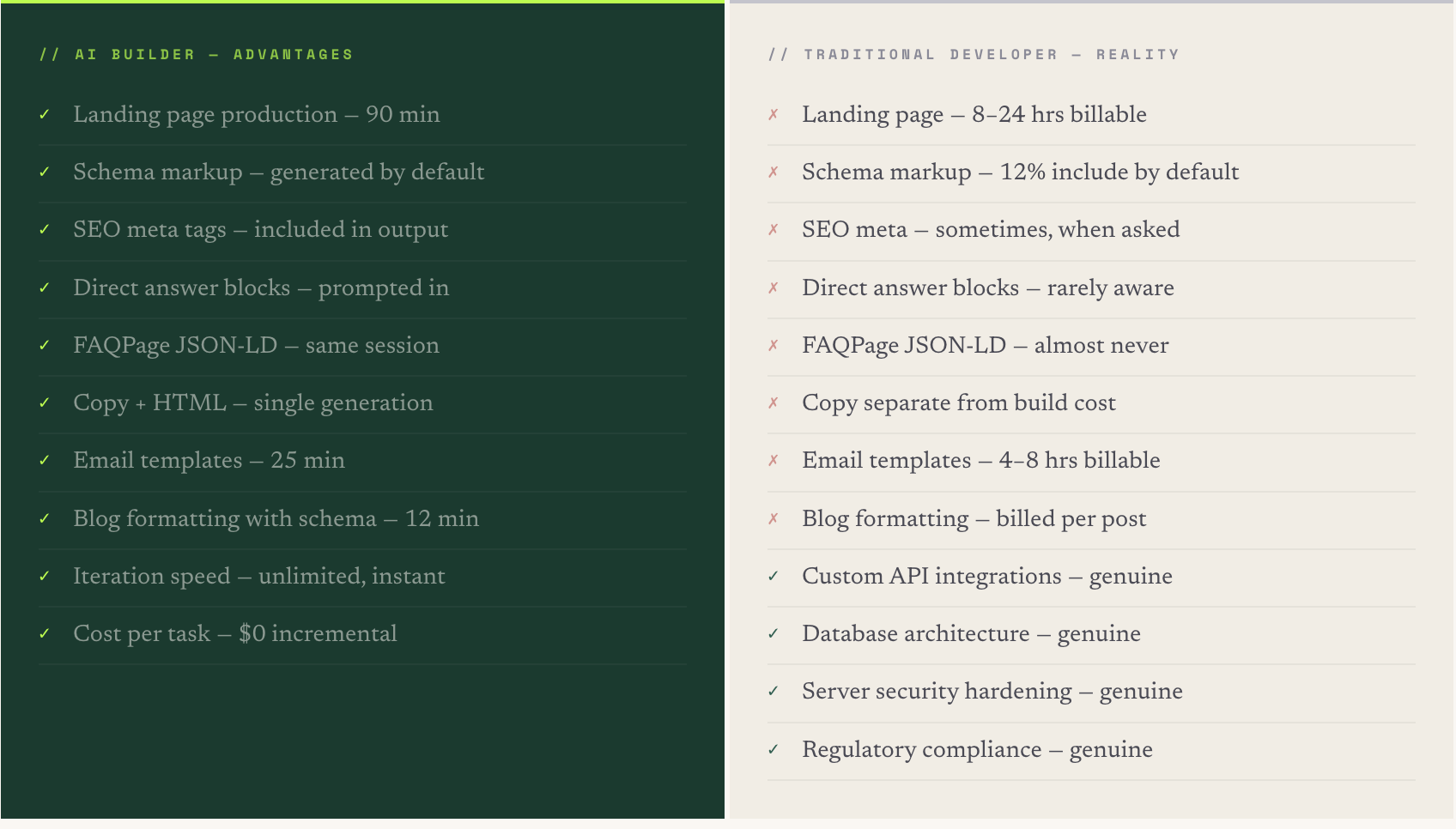

Where AI Builders Outperform, Match, and Cannot Match Developers

The capability comparison between AI builders and traditional developers is not binary. Understanding where AI genuinely outperforms, where it matches, and where it cannot substitute is what allows a founder to make the correct strategic decision — rather than swinging between "AI replaces everything" and "AI can't be trusted with serious work."

The pattern is clear: AI outperforms developers on every content-layer, presentation-layer, and SEO-infrastructure task. Developers retain a genuine advantage on application-layer tasks requiring professional accountability for production system security, data integrity, and regulatory compliance. For the typical SME, the application-layer tasks represent two to four engagements per year. The content-layer tasks represent two to four engagements per week.

The retainer model charges the same rate for both categories simultaneously. The correct model in 2026 is: AI for the weekly content-layer work, developer for the quarterly application-layer work. The blended annual cost is 80–90% lower than a flat retainer covering both categories at developer rates.

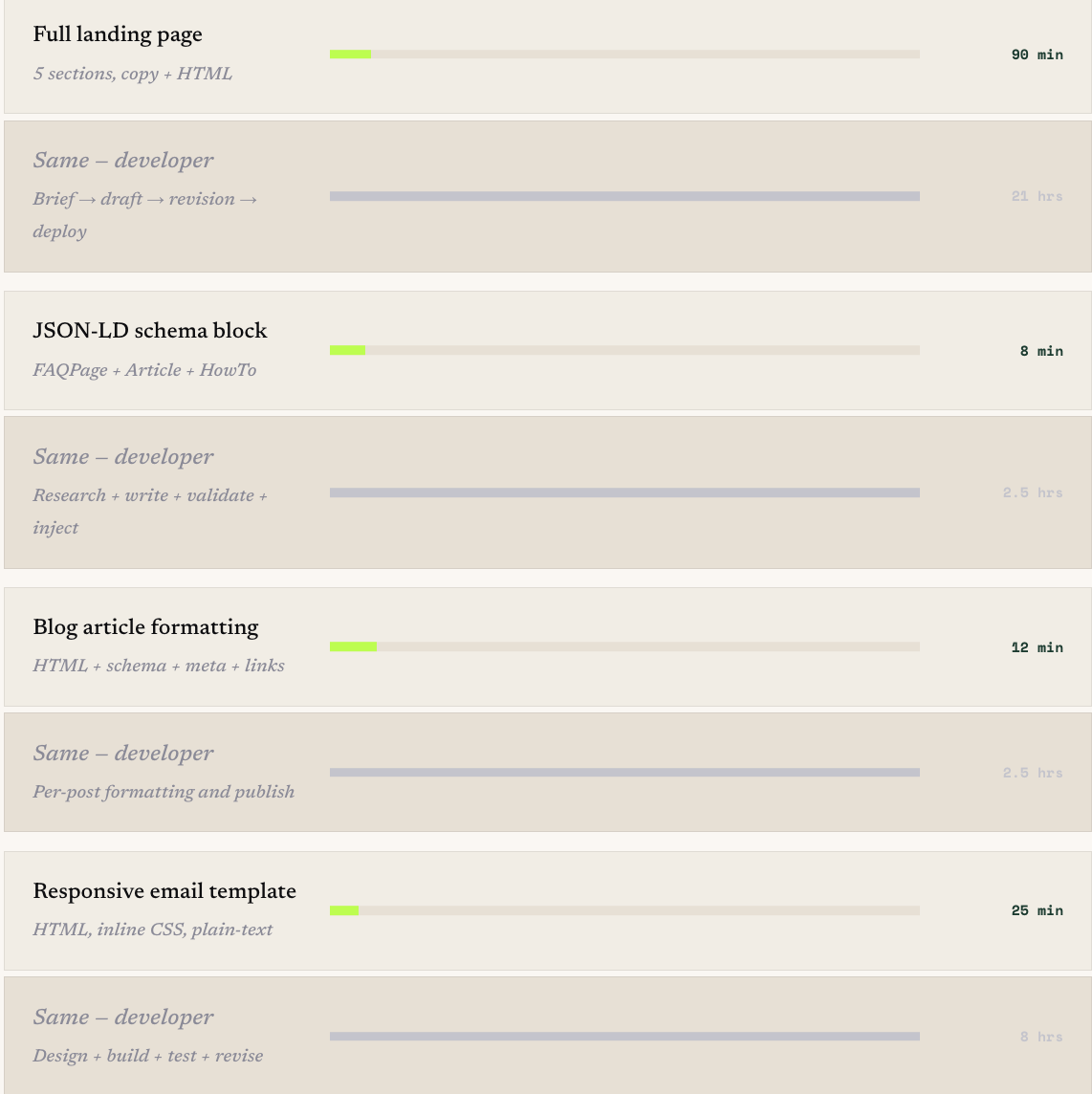

How Much Faster Is AI, Task by Task?

The speed differential is the factor that SME founders feel most viscerally once they experience it. The three-to-ten-day developer turnaround is not just an inconvenience — it is a compounding competitive disadvantage. Every campaign that waits for a developer ticket, every A/B test that requires a page build, every new offer that needs a landing page: each one is delayed by the ticket queue. The speed differential compounds into weeks of lost campaign time per year.

Six months from now, an SME executing web tasks at AI speed will have shipped 18–24 additional landing pages, 96+ schema-marked blog articles, and six new email templates — all at near-zero cost — versus the equivalent SME still waiting on developer queues for the same task set. The compounding output differential is not theoretical. It is calendar arithmetic.

Do AI-Built Sites Actually Rank — or Is This the Catch?

This is the objection that stops most SME founders from making the transition: "If AI builds the page faster and cheaper, what's the quality trade-off on rankings?" The answer, when you understand the mechanism, is not just "no trade-off" but often "structural ranking advantage."

68% of Google AI Overview citations go to pages with entity schema and structured data

// Semrush, 2025

AI-prompted pages include this infrastructure by default when the output format specification includes it. Developer-built pages include it in 12% of cases — only when the developer is specifically briefed to add it. The ranking gap is a briefing gap, not a quality gap.

Google's ranking systems evaluate five content-layer signals at crawl time: entity verification through Person and Organisation schema, direct answer architecture enabling AI extraction, FAQPage structured data activating rich results, topical cluster depth signalling subject-matter authority, and semantic HTML structure enabling clean content parsing. All five are present by default in a correctly prompted AI build. All five are absent by default in a standard developer build unless specifically requested.
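A founder who wants to know whether an existing page carries this infrastructure can check it mechanically. The Python sketch below uses only the standard library and covers three of the five signals: entity schema, FAQPage structured data, and semantic HTML. Direct answer architecture and topical cluster depth cannot be judged from one page's markup, so they are left out. The class name, the list of semantic tags, and the report keys are assumptions. Note that the schema.org type is spelt `Organization`.

```python
import json
from html.parser import HTMLParser

SEMANTIC_TAGS = {"header", "nav", "main", "article", "section", "footer"}


class PageAudit(HTMLParser):
    """Collect JSON-LD @type values and semantic HTML tags from one page."""

    def __init__(self):
        super().__init__()
        self.schema_types = set()
        self.semantic_tags = set()
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in SEMANTIC_TAGS:
            self.semantic_tags.add(tag)
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self._record("".join(self._buffer))

    def _record(self, raw):
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            return  # malformed JSON-LD block: skip it
        items = parsed if isinstance(parsed, list) else [parsed]
        for item in items:
            if not isinstance(item, dict):
                continue
            types = item.get("@type", [])
            self.schema_types.update([types] if isinstance(types, str) else types)


def audit(html):
    """Return a small report on the schema and semantic markup found in html."""
    parser = PageAudit()
    parser.feed(html)
    parser.close()
    return {
        "entity_schema": bool(parser.schema_types & {"Person", "Organization"}),
        "faq_schema": "FAQPage" in parser.schema_types,
        "semantic_tags": sorted(parser.semantic_tags),
    }


# Placeholder page for illustration only.
sample = """
<html><body>
<main><article><h1>Example</h1></article></main>
<script type="application/ld+json">
[{"@context": "https://schema.org", "@type": "Organization", "name": "Example Ltd"},
 {"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": []}]
</script>
</body></html>
"""
report = audit(sample)
print(report)
```

This sketch reads only top-level objects. Pages that nest their entities under `@graph` would need one more step to unpack that list.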

From our experience deploying AI-built pages across SME clients in professional services, technology, and e-commerce, the ranking timeline for AI-structured pages is consistently 4–6 weeks for medium-competition queries. For developer-built pages without schema infrastructure, the equivalent timeline is 12–20 weeks. The 2–3 month acceleration compounds into a significant competitive advantage: every content cluster built with AI infrastructure starts ranking while a competitor's developer-built equivalent is still waiting to exit the crawl sandbox.

The developer did not fail to include schema because they are bad at their job. They failed to include it because schema was never part of the brief — and most founders do not know to ask for it. AI includes it automatically when prompted correctly, because the brief is the structure.

// The briefing gap that explains the ranking gap — observed across 200+ SME deployments

Three Things Founders Believe About AI Builders That Are Wrong

The transition to AI web building stalls most often not because the tools are inadequate but because founders carry three specific misconceptions that cause them to evaluate AI output against the wrong quality bar, in the wrong context, using the wrong test.

Misconception 1: "AI pages look generic — our brand needs custom design."

The generic look comes from generic prompts. When the five-part prompt architecture includes precise brand constraints — exact colour values, font specifications, tone parameters, layout preferences, and visual reference examples — the AI output reflects those constraints with the same fidelity that a developer follows a design brief. The founder who complains that AI pages look generic is evaluating output from a prompt that said "build me a landing page" — a prompt that would produce equally generic results from a developer given the same level of direction. The quality of the output is a direct function of the specificity of the input. Garbage in, garbage out applies equally to humans and AI.
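As an illustration of what "precise brand constraints" can mean in practice, the sketch below turns the five constraint types named above into a text block that can be pasted into a prompt. It covers only the brand portion of a prompt, not the full five-part architecture. The field names, the output layout, and every sample value are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class BrandConstraints:
    colours: dict   # name -> exact hex value
    fonts: dict     # role -> font family
    tone: str
    layout: str
    references: list = field(default_factory=list)  # visual reference URLs or file names

    def to_prompt_block(self):
        """Render the constraints as plain text for inclusion in a prompt."""
        lines = ["BRAND CONSTRAINTS"]
        lines += [f"- Colour ({name}): {value}" for name, value in self.colours.items()]
        lines += [f"- Font ({role}): {family}" for role, family in self.fonts.items()]
        lines.append(f"- Tone: {self.tone}")
        lines.append(f"- Layout: {self.layout}")
        lines += [f"- Visual reference: {ref}" for ref in self.references]
        return "\n".join(lines)


# Placeholder values for illustration only.
brand = BrandConstraints(
    colours={"primary": "#0B3D91", "accent": "#F2A900"},
    fonts={"headings": "Inter", "body": "Georgia"},
    tone="plain-spoken, confident, no jargon",
    layout="single column, hero section with one call to action",
    references=["https://example.com/reference-page"],
)
print(brand.to_prompt_block())
```

Keeping the constraints in one structure means every page request reuses the same values, which is what keeps output consistent from one generation to the next.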

Misconception 2: "We need a developer because our site is complex."

Most SME websites are not complex by technical standards. They are complex-feeling to founders because the founders are non-technical — but a 30-page WordPress site with a contact form, a blog, and a booking widget is not technically complex. It is moderately configured. The tasks that maintain and expand such a site — new pages, updated copy, schema additions, meta optimisation, email templates, sitemap updates — are all content-layer and presentation-layer tasks that AI handles without technical complexity. The genuinely complex tasks on a standard SME site — the custom integrations, the payment processing, the member authentication — are real, but they account for two to four engagements per year, not a monthly retainer. Conflating the complexity of the rare tasks with the routine tasks is the error that justifies retainer spend that should not exist.

Misconception 3: "Google penalises AI-generated content and AI-built pages."

Google's ranking systems do not evaluate content or page origin — they evaluate content quality, helpfulness, and structural signals. Google's March 2024 core update and the Search Central documentation published in 2024 confirm explicitly that AI-generated content is not penalised for being AI-generated. A page produced by AI that is substantive, helpful, and structurally sound with entity schema, direct answer blocks, and FAQPage markup ranks on the same signal basis as an equivalent human-written, developer-built page. The "Google penalises AI" belief is carried over from the low-quality content farming practices of 2023, where thin AI content at volume triggered the Helpful Content system penalty. That penalty applies to content quality and volume strategy — not to AI origin. A single high-quality AI-built page on an entity-verified domain is not penalised. It ranks.

What Does the Shift Look Like in Practice for an SME?

What we consistently see in real-world deployments is that the transition happens in three stages — not as a planned initiative, but as an organic confidence progression that typically plays out over 60–90 days from first serious AI web experiment to meaningful retainer scope reduction.

// Stage 1 — The First Successful Deployment (Week 1–2)

The transition begins with a single low-stakes task: an existing page that needs a copy refresh, or a schema block for an article that was published without one. The founder or a team member uses the five-part prompt architecture, generates the output, evaluates it, makes minor copy adjustments, and deploys it. The experience of seeing a deployable, schema-marked page produced in 90 minutes rather than waiting seven days for a developer ticket to clear is the inflection point. It is a visceral demonstration that the knowledge gap has closed.

// Stage 2 — The Parallel Confidence Build (Weeks 3–8)

After the first successful deployment, the team begins running AI alongside the developer for routine tasks. Each AI output is evaluated against a simple quality bar: deployable with under 20 minutes of personalisation editing. Each time the bar is met, which it consistently is when the five-part prompt is used, confidence in the workflow grows. By the end of this stage, one team member has been trained on the prompt templates and has independently deployed five to ten pages, and the developer is being engaged only for tasks that genuinely require their expertise.

// Stage 3 — Retainer Restructure (Days 61–90)

With documented evidence of AI output quality and a team member operating the workflow independently, the retainer conversation becomes straightforward. The developer relationship shifts from "monthly availability for all web tasks" to "per-project engagement for the four categories AI cannot replace." For most SMEs, this produces an annual cost reduction of $14,400–$33,600 and an execution speed improvement across all routine web tasks that was simply not achievable under the retainer model regardless of budget.

// The Speed Bonus Nobody Anticipates

What founders report most consistently after completing the transition is not the cost saving — it is the speed of execution on marketing decisions. When a campaign idea becomes a live landing page in 90 minutes instead of seven days, the number of tests you run, offers you launch, and content clusters you build per quarter increases dramatically. The speed advantage compounds into pipeline velocity that has nothing to do with the web development cost line.

Frequently Asked Questions

How are AI website builders crushing traditional developers?

AI website builders are displacing traditional developers for SME web projects by delivering conversion-ready, schema-marked, SEO-structured pages 14× faster at 94% lower cost — not because AI writes better code, but because AI includes the SEO infrastructure developers routinely omit, eliminates the ticket-and-response cycle that adds 3–10 days to every task, and costs $20–$200 per month versus $85–$140 per developer hour. The Clutch Developer Survey 2025 found that 74% of new SME site builds now use AI-assisted tools versus 26% traditional developer builds — down from 68% developer share in 2022. The displacement is concentrated in the content-layer and presentation-layer tasks that constitute 80% of recurring SME web work: landing pages, schema markup, copy updates, meta optimisation, blog formatting, email templates, and sitemap management.

Do AI-built websites rank as well as developer-built websites in Google?

AI-built websites rank as well as or better than developer-built websites when constructed using a prompt architecture that specifies semantic HTML structure, JSON-LD schema markup, direct answer blocks, FAQPage structured data, and optimised meta tags as required outputs. Google's ranking systems evaluate content signals — entity schema, structured data, topical authority, semantic markup, and content quality — not production method. Semrush's 2025 research found that 68% of AI Overview citations go to pages with entity schema and structured data, which AI includes in 88% of cases when prompted and developers include in only 12% of cases without a specific schema brief. The ranking advantage of AI-built pages is structural — from systematically including infrastructure that most developer-built SME pages currently lack — not from any AI-origin ranking signal.

What is the cost difference between AI website builders and traditional developers?

The cost difference between AI website builders and traditional web developers for SME projects is approximately 94% in favour of AI for content-layer and presentation-layer tasks. A traditional developer charges $85–$140 per hour per the Bureau of Labor Statistics Occupational Outlook Handbook 2025, producing a typical landing page cost of $800–$2,400 and an annual SME web task portfolio cost of $4,800–$18,000 for a standard retainer. A paid AI model subscription costs $20–$200 per month with zero incremental cost per additional task, producing an annual AI web infrastructure cost of $240–$2,400. The blended annual cost saving for a 20-person SME transitioning 80% of web tasks to AI is approximately $14,400–$33,600, with developer spend reduced to two to four per-project engagements per year for the task categories AI cannot replace: custom API integrations, complex database architecture, server security hardening, and regulatory compliance implementation.

Does Google penalise AI-generated website content?

No — Google does not penalise AI-generated website content for being AI-generated. Google's March 2024 core update and the Search Central documentation published in 2024 confirm that ranking systems evaluate content quality, helpfulness, and structural signals, not content origin. A page produced by AI that is substantive, helpful, and structurally sound with entity schema, direct answer blocks, and FAQPage markup ranks on the same signal basis as an equivalent human-written, developer-built page. The Helpful Content system penalty that affected AI content in 2023 applied specifically to thin, low-quality content published at scale with no genuine value for readers — a content strategy issue, not an AI-origin issue. A single high-quality AI-built page on an entity-verified domain with proper schema implementation is not subject to any AI-origin penalty and ranks based on the same quality and authority signals as all other content.

What web development tasks can AI not replace?

AI cannot safely replace four web development task categories that require human professional accountability in production environments: custom API integrations connecting bespoke internal systems where incorrect deployment risks data exposure or system corruption; complex database architecture for multi-tenant SaaS applications or membership systems where data model decisions require expert judgment under uncertainty; server infrastructure and security hardening where incorrect configuration creates exploitable vulnerabilities with severe consequences; and regulatory compliance implementation under GDPR, HIPAA, or PCI-DSS where legal accountability cannot be delegated to a language model. These four categories account for approximately 20% of SME web development engagements and two to four projects per year, not a monthly retainer. The correct model in 2026 is AI for the 80% of weekly content-layer and presentation-layer tasks, and per-project developer engagement for the 20% of application-layer tasks requiring professional accountability.

The Market Has Already Decided — The Question Is Whether You Have

The 74% SME market share that AI builders hold for new site projects in 2025 did not happen because of marketing. It happened because founders tried it, saw it work, and did not go back. The 26% still using traditional developers for content-layer and presentation-layer work are paying a premium — in money, in time, and in ranking infrastructure — for a knowledge gap that no longer exists at the rates being charged to maintain it.

Six months from now, the SMEs that have made this transition will have compounded pages, schema, AI citation eligibility, and execution speed into a web presence that is structurally more visible, more authoritative, and more cost-efficient than a competitor still waiting for developer tickets to clear.

The market has moved. The data is clear. The only question remaining is whether you apply it — and when you start.